Configuring a Compute Backend

Note about who can administer Container Service features

As of Container Service version 3.7.0 and XNAT version 1.9.2, a Site Admin can no longer administer Container Service features. A user with the Container Manager Role and the Privileged Role can administer the Container Service. These roles can also be granted to a Site Admin so that the Site Admin can administer the Container Service.

All Container Service site configuration screens are available via the top navigation under Administer > Plugin Settings.

Preamble: Understanding the XNAT Docker Library

Originally we used the spotify/docker-client library to wrap the Docker remote API in Java method calls. Its maintainers stopped updating it and put out their final release, v6.1.1, in 2016.

We switched the Container Service to use a fork of that client, dmandalidis/docker-client in CS version 3.0.0. Given that this was a fork of the client we already used, it was a simple drop-in replacement with no changes needed.

But that library maintainer did continue to make changes. In 2023 they released a major version upgrade, v7.0.0, which dropped support for Java 8. That is the version of Java we use in XNAT (at time of writing) so this change meant we weren't able to update our version of this library. That was fine for a while...

...Until version 25 of the docker engine, in which they made an API change which caused an error in the version we used of docker-client. The library (presumably) fixed their issue but we weren't able to use that fix because our version of the library was frozen by their decision to drop Java 8 support.

This forced us to switch our library from docker-client to docker-java. This was not a drop-in replacement, and did require a migration. All the same docker API endpoints were supported in a 1:1 replacement (which took a little effort but was straightforward), except for one. The docker-java library did not support requesting GenericResources on a swarm service, which is the mechanism by which we allow commands to specify that they need a GPU. We opened a ticket reporting that lack of support (https://github.com/docker-java/docker-java/issues/2320), but at time of writing there has been no response. I created a fork (https://github.com/johnflavin/docker-java) and fixed the issue myself (https://github.com/docker-java/docker-java/pull/2327), but at time of writing that also has no response. I built a custom version of docker-java, 3.4.0.1, and pushed that to the XNAT artifactory (ext-release-local/com/github/docker-java).

Long story short, as of CS version 3.5.0 we depend on docker-java version 3.4.0.1 for our docker (and swarm) API support.

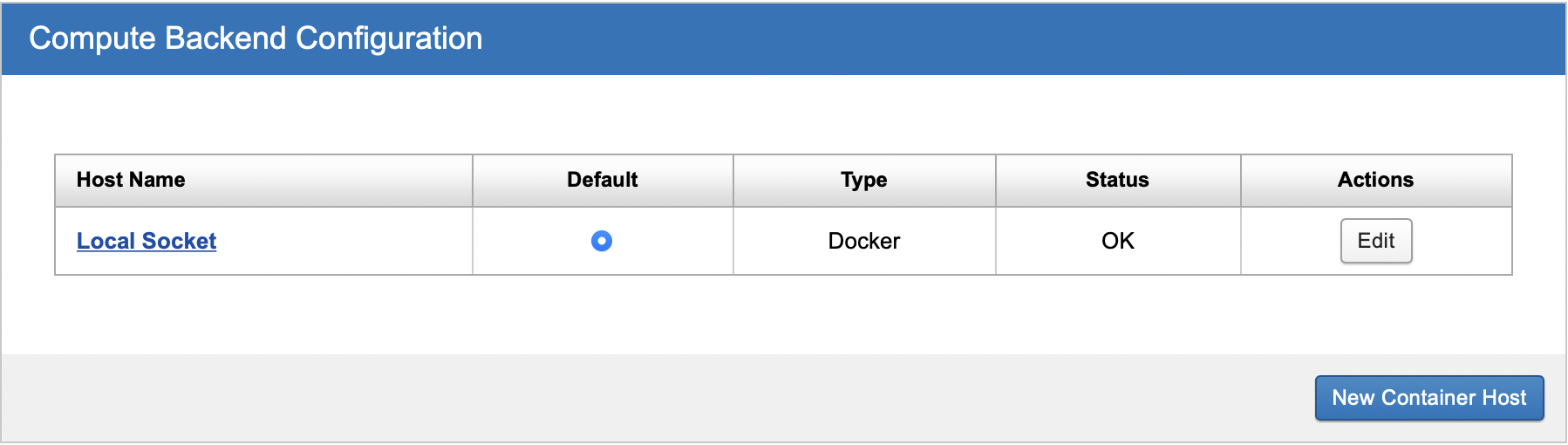

Connecting XNAT with your Compute Backend

XNAT needs to know how to communicate with your compute backend over HTTP. By default, the Container Service is configured to communicate with a local Docker server that listens for connections on a UNIX socket. If Docker is listening on the default socket, and XNAT can access that socket, then you won't need to change anything. The default Container Service settings correspond to the default Docker settings.

If your Docker server is set up to listen on the default socket but your XNAT cannot communicate with Docker, you may need to add the "xnat" system user to the Docker group.
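
One quick way to check whether the account that runs XNAT can reach the Docker socket is to test the socket file's permissions while logged in as that account. The sketch below assumes the socket is at the common default location `/var/run/docker.sock`; the function name `can_use_socket` is illustrative and not part of XNAT or Docker.

```python
import os
import stat


def can_use_socket(path="/var/run/docker.sock"):
    """Return True if `path` is a UNIX socket the current user can read and write."""
    try:
        mode = os.stat(path).st_mode
    except OSError:
        # The file is missing, or this user is not allowed to stat it.
        return False
    return stat.S_ISSOCK(mode) and os.access(path, os.R_OK | os.W_OK)


if __name__ == "__main__":
    # Run this as the system user that runs XNAT (for example, "xnat").
    print("Docker socket usable:", can_use_socket())
```

If this prints `False` when run as the XNAT system user but `True` for a user already in the Docker group, group membership is the likely cause.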

If your Docker server is on a separate machine from your XNAT server, and XNAT has no access to the Docker socket, then you need to enable communication on both sides.

Enable docker to listen for TCP connections. These can be either secured (HTTPS) or unsecured (HTTP).

Set up a new Docker server on the Container Service administration page (see the screenshot above). Click the "New Container Host" button, then enter a user-friendly name for the server, the URL at which your Docker server is listening, and (if you configured Docker to listen over HTTPS) the path to the certificate file used to secure communications.

Docker HTTP

We recommend allowing access to the Docker server only through the socket or HTTPS. Do not enable HTTP unless both of the following are true:

Your docker server is behind a firewall. Enabling HTTP with no firewall would allow anyone on the internet to execute arbitrary code on your docker server.

You are careful about the containers you allow XNAT users to execute. In particular, be very careful about allowing users to execute custom scripts. The containers run inside your firewall, which means a container could use the docker HTTP API to start new containers. This would allow users to completely control the docker server and view any XNAT data, bypassing the XNAT security model.

Enabling access to docker only through the socket or HTTPS does not create these problems.
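
As a rough illustration of the recommendation above, the following sketch classifies a backend URL by its scheme and flags plain HTTP as not recommended. The scheme names it accepts (`unix`, `https`, `http`), the host `docker.example.org`, and the port numbers are assumptions made for the example; check which URL forms your Container Service version actually accepts.

```python
from urllib.parse import urlparse


def classify_backend_url(url):
    """Classify a backend URL as 'socket', 'https', or 'http' (unencrypted)."""
    scheme = urlparse(url).scheme.lower()
    if scheme == "unix":
        return "socket"
    if scheme in ("https", "http"):
        return scheme
    raise ValueError(f"Unrecognized scheme in {url!r}")


def is_recommended(url):
    """Only the socket and HTTPS are recommended; plain HTTP is not."""
    return classify_backend_url(url) in ("socket", "https")


if __name__ == "__main__":
    # Hypothetical URLs, for illustration only.
    for candidate in (
        "unix:///var/run/docker.sock",
        "https://docker.example.org:2376",
        "http://docker.example.org:2375",
    ):
        print(candidate, "->", classify_backend_url(candidate), is_recommended(candidate))
```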

Editing or Setting Up a New Compute Backend

Currently the Container Service only supports having one Compute Backend. There is no practical difference between "Edit" and "New Container Host" since either will replace the existing Compute Backend settings.

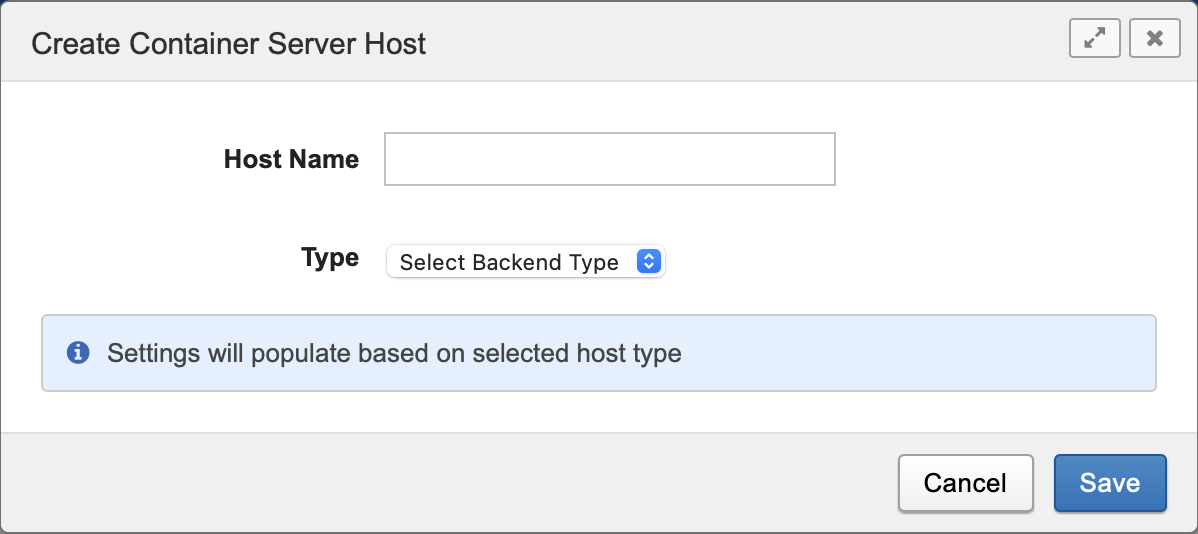

Compute Backends support a variety of configuration options, most notably allowing administrators to configure the type of Backend, such as Docker Swarm or Kubernetes. To get started, click in the UI to Edit an existing Compute Backend or create a new one. You will see a dialog like this:

Initial Settings

| Setting | Description |
|---|---|
| Host Name | This is a text field that serves as a label for your host configuration |
| Type | One of Docker, Docker Swarm, or Kubernetes. |

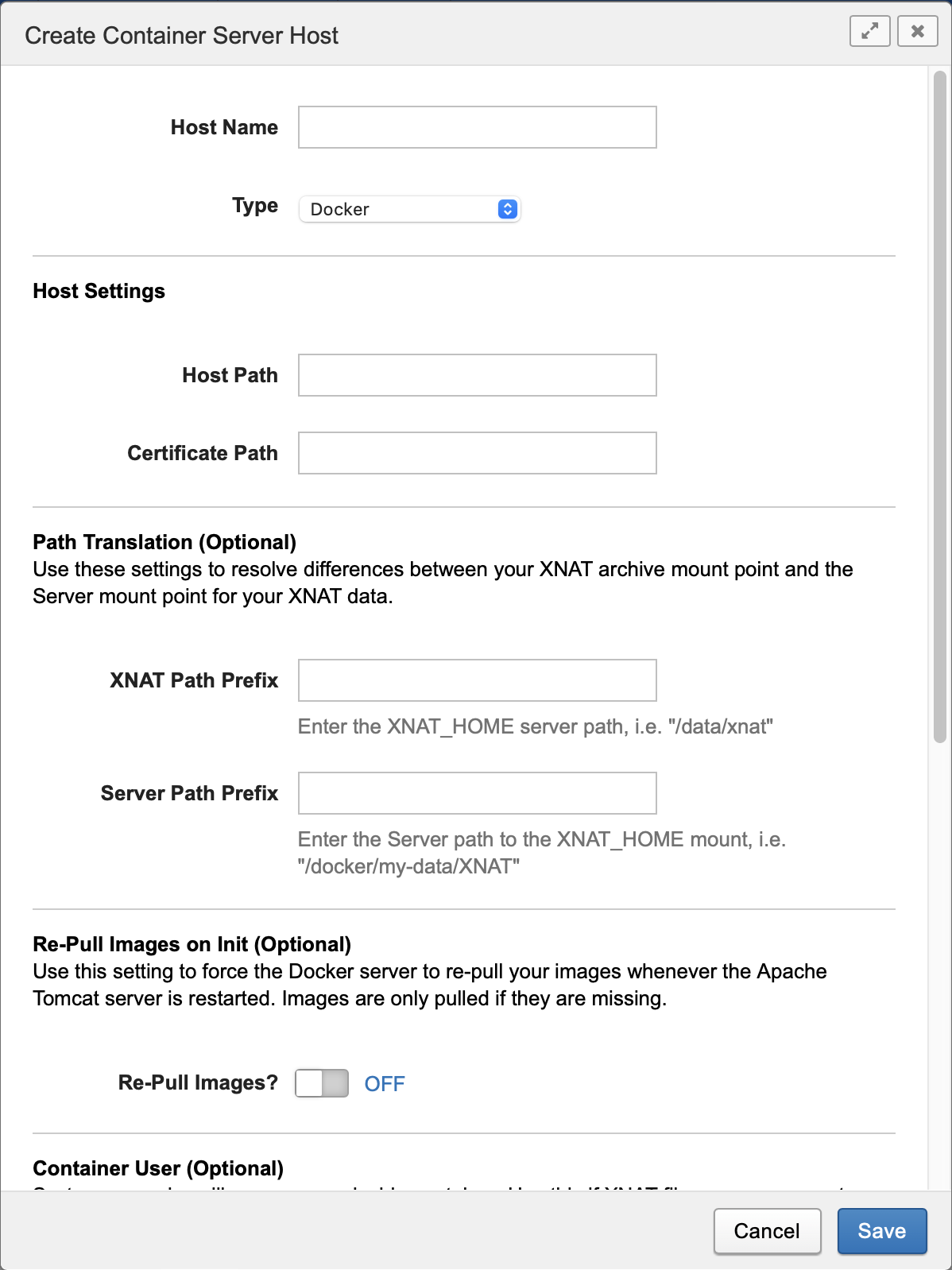

Once you select a Type, more configuration options will appear that are relevant to that Compute Backend type. For example, if you select "Docker" for the type, you will see these options:

Compute Backend Types

Docker is a service for launching and managing containers. It is a widespread technology and easy to install in many environments.

Docker Swarm is Docker's term for enabling batches of containers to be managed in a queue and farmed out across multiple processing nodes. If you already have a Docker server configured it is easy to enable "Swarm mode". Refer to the Docker Swarm Mode documentation for details on how to configure this mode in your Docker Server.

Kubernetes (https://kubernetes.io) is an alternative container management technology to Docker Swarm. Unlike Docker Swarm, which is very similar to standalone Docker and shares many API concepts with it, Kubernetes is built around an entirely different API model. It is widely used in production compute environments, and many cloud platforms provide robust Kubernetes implementations, for instance AWS EKS. Refer to the Setting up Container Service on Kubernetes documentation for more detail on how to configure this mode.

The default Compute Backend for the Container Service is a standalone Docker configuration. This is the easiest Compute Backend to get installed and running. However, we strongly recommend setting up one of the other supported Compute Backend server types, Docker Swarm or Kubernetes, and configuring the Container Service to use that instead. These other backend types are much better able to handle running and queueing multiple container launches. If you expect to launch containers in bulk or via an Automation then your XNAT system will be more stable using Docker Swarm or Kubernetes as your Compute Backend than standalone Docker.

Host Settings

All the Compute Backend types allow you to set parameters for how and where to connect to the Compute Backend server.

Currently the Container Service does not use the Host Settings section when Kubernetes is selected. Instead, the Container Service reads the connection information for a Kubernetes backend from a KubeConfig file which you must provide. See Setting up Container Service on Kubernetes for more information.

| Setting | Description |
|---|---|
| URL | This is the HTTPS or UNIX path where your Compute Backend server is configured to listen for new connections. See Processing URL for more detail. |
| Certificate Path | This is the local file path to your server's SSL certificate, if needed to authenticate the above URL |

Path Translation

To run processing on your XNAT resource files, the XNAT archive must be mounted inside the running container. In most deployments, your XNAT server and your Compute server run side by side or are connected over HTTPS, and this mount process is fairly straightforward. However, if your XNAT server is itself running inside a Docker container (for example, using the xnat-docker-compose build), then the relationship between the XNAT archive and the Compute server becomes more complicated, because the archive is already mounted into the XNAT container and the path XNAT sees may differ from the path on the host.

The "path translation" configuration setting was created to resolve these differences. See Path Translation for more details on how (and when) to fill out these fields.

| Setting | Description |
|---|---|
| XNAT Path Prefix | The path to your XNAT_HOME directory as it is visible to XNAT on the XNAT server, i.e. |
| Server Path Prefix | The path where the XNAT_HOME directory is mounted on your compute node(s), i.e. |
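
Conceptually, path translation is a prefix swap: a path that starts with the XNAT Path Prefix is rewritten to start with the Server Path Prefix. The sketch below illustrates that idea only; it is not the Container Service's implementation, and the function name and the example paths (`/data/xnat`, `/mnt/host/xnat-data`) are hypothetical.

```python
def translate_path(path, xnat_prefix, server_prefix):
    """Swap the XNAT-side prefix for the compute-node prefix on one path."""
    xnat_prefix = xnat_prefix.rstrip("/")
    server_prefix = server_prefix.rstrip("/")
    if path == xnat_prefix:
        return server_prefix
    if path.startswith(xnat_prefix + "/"):
        # Keep everything after the prefix, including the leading slash.
        return server_prefix + path[len(xnat_prefix):]
    # Paths outside the XNAT prefix are left untouched.
    return path


if __name__ == "__main__":
    # Hypothetical prefixes, for illustration only.
    print(translate_path(
        "/data/xnat/archive/proj1/arc001",
        xnat_prefix="/data/xnat",
        server_prefix="/mnt/host/xnat-data",
    ))
```

Matching on `xnat_prefix + "/"` rather than on the bare prefix avoids rewriting an unrelated sibling directory such as `/data/xnat2`.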

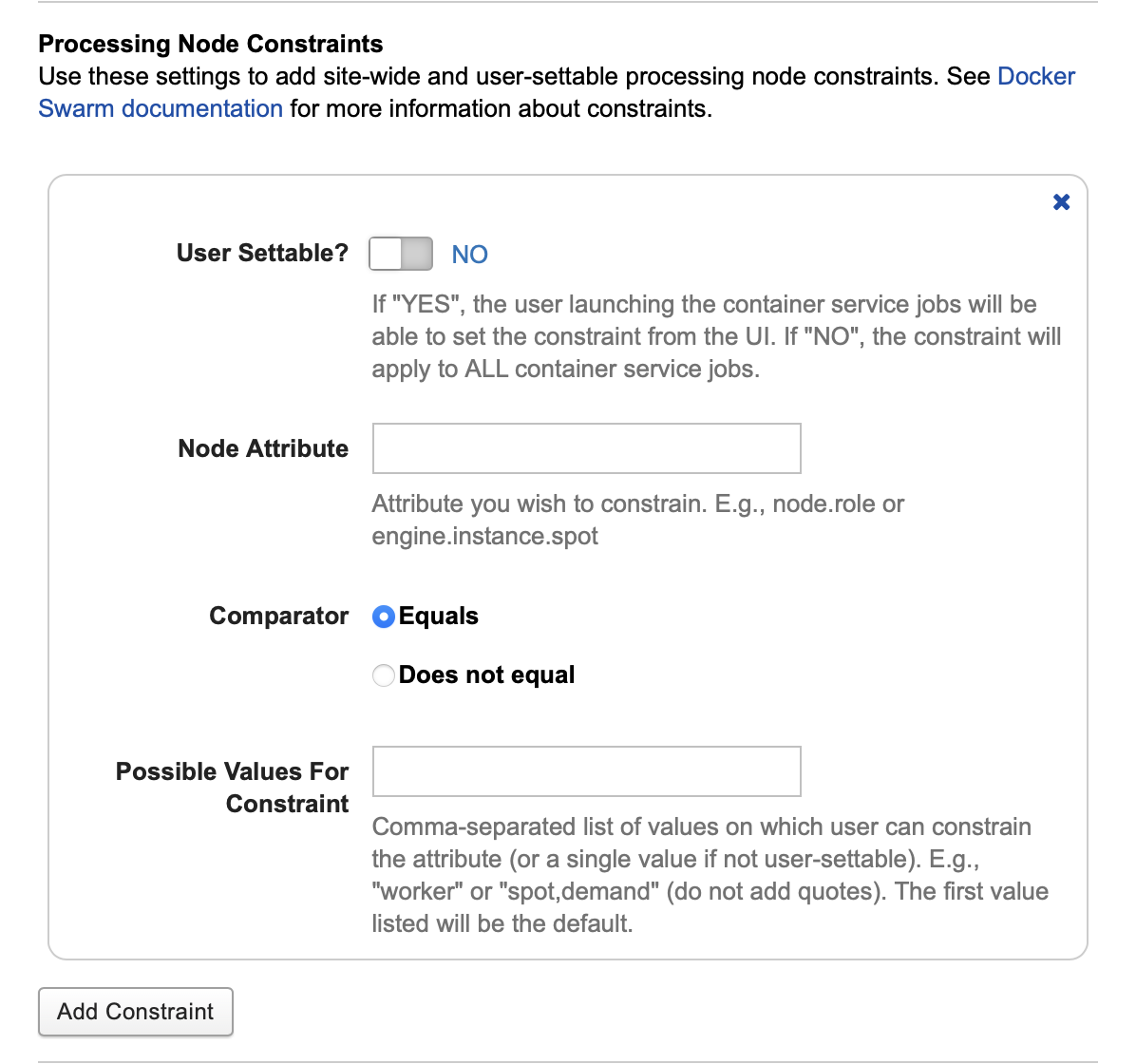

Processing Node Constraints

In Docker Swarm and Kubernetes modes, the Compute Backend must schedule containers onto processing nodes. The Container Service allows you to set Constraints that will influence this backend scheduling behavior.

Note that constraints can only be added for the entire Compute Backend and will apply to all container launches. The values of user-settable constraints can be changed per launch, but constraints cannot be customized per container type.

To add a constraint, click the Add Constraint button in the Processing Node Constraints section.

| Setting | Description |
|---|---|
| User Settable | If "YES", the user launching the container service jobs will be able to set the constraint value from the User Interface. If "NO", the constraint will apply to ALL container service jobs. |
| Node Attribute | Attribute you wish to constrain. On Docker Swarm the allowable values can be node or engine properties; see the Docker documentation: "Placement Constraints" (Deploy Services to a Swarm) and "Specify service constraints" (docker service create CLI documentation). On Kubernetes constraints can only apply to node labels; see "Assign Pods to Nodes Using Node Affinity". |
| Comparator | Options: "Equals" or "Does not equal". Controls whether the backend will place jobs on nodes that do match the constraint, or do not match the constraint. |
| Possible Values for Constraint | A value, or comma-separated list of values. If the constraint is user-settable the values in this list will be the options which they can select. The first value is the default. If the constraint is not user-settable then the first value will always be used. |
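
To make the four settings concrete, the sketch below combines them into a Docker Swarm placement-constraint expression of the form `attribute==value` or `attribute!=value`, which is the syntax Docker documents for service constraints. How the Container Service assembles constraints internally is not described here, so treat this as an illustration; the function name and the label `node.labels.type` are hypothetical.

```python
def swarm_constraint(attribute, comparator, possible_values, chosen=None):
    """Build a Swarm placement-constraint string from the settings in the table.

    `possible_values` is the comma-separated list from the settings form.
    `chosen` is the value picked at launch for a user-settable constraint;
    when it is None the first listed value (the default) is used.
    """
    options = [v.strip() for v in possible_values.split(",") if v.strip()]
    if not options:
        raise ValueError("at least one value is required")
    value = options[0] if chosen is None else chosen
    if value not in options:
        raise ValueError(f"{value!r} is not one of {options}")
    operator = {"Equals": "==", "Does not equal": "!="}[comparator]
    return f"{attribute}{operator}{value}"


if __name__ == "__main__":
    # Hypothetical node label, for illustration only.
    print(swarm_constraint("node.labels.type", "Equals", "cpu, gpu"))
    print(swarm_constraint("node.labels.type", "Equals", "cpu, gpu", chosen="gpu"))
    print(swarm_constraint("node.role", "Does not equal", "manager"))
```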

Other Settings

You can configure a series of other settings as well to enable or disable certain Container Service behaviors.

| Setting | Description |
|---|---|
| Re-Pull Images on Init | Optional: Use this setting to force the Docker server to re-pull your images whenever the Apache Tomcat server is restarted. Images are only pulled if they are missing. This setting will not function properly in Docker Swarm or Kubernetes modes, where the concept of "local images" is less meaningful. Default: |
| Container User | Optional: By default, Docker will execute container processes running as the "root" user, or allow containers to designate their own user. This field can override those defaults and allow you to specify a valid system user to execute containers. In Docker and Docker Swarm modes the allowed values are user, user:group, uid, uid:gid, user:gid, or uid:group, where "user" and "group" refer to string names and "uid" and "gid" refer to integer ids. In Kubernetes mode the only allowed value is an integer uid. |
| Automatically cleanup completed containers | Use this setting to automatically remove completed containers after saving outputs and logs. If you do not use this setting, you will need to run some sort of cleanup script on your server to remove old containers so as not to run out of system resources. Default: |
| Container status emails | Send an email to the user who launched the container once it completes. Default: |
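
The allowed forms of the Container User value can be checked with a small validator before you enter them. This is a sketch under assumptions: the backend labels `"docker"`, `"swarm"`, and `"kubernetes"` are names chosen for the example, and the set of characters permitted in user and group names is a guess rather than something the Container Service defines.

```python
import re

# One part is either a name or an integer id. The characters allowed in
# names here are an assumption made for this example.
_PART = r"[A-Za-z0-9_][A-Za-z0-9_.-]*"
_USER_OR_USER_GROUP = re.compile(rf"{_PART}(?::{_PART})?")
_INTEGER_UID = re.compile(r"[0-9]+")


def is_valid_container_user(value, backend):
    """Check a Container User value against the forms listed in the table."""
    if backend == "kubernetes":
        # Kubernetes mode: an integer uid only.
        return _INTEGER_UID.fullmatch(value) is not None
    if backend in ("docker", "swarm"):
        # user, uid, user:group, uid:gid, user:gid, or uid:group.
        return _USER_OR_USER_GROUP.fullmatch(value) is not None
    raise ValueError(f"Unknown backend {backend!r}")


if __name__ == "__main__":
    print(is_valid_container_user("xnat:100", "docker"))
    print(is_valid_container_user("xnat", "kubernetes"))
```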

Kubernetes: GPU Vendor

If you are configuring a Kubernetes backend and you intend to run containers that require a GPU, you must select the vendor. The options are Nvidia or AMD.
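
For background, Kubernetes exposes GPUs as vendor-specific extended resources published by each vendor's device plugin, commonly `nvidia.com/gpu` and `amd.com/gpu`, which is presumably why the vendor has to be chosen up front. The sketch below shows how a vendor choice could map to a container resource limit. It is an illustration of the Kubernetes convention, not the Container Service's code, and the function name is hypothetical.

```python
# Extended resource names commonly published by the vendors' device plugins.
GPU_RESOURCE_NAMES = {
    "Nvidia": "nvidia.com/gpu",
    "AMD": "amd.com/gpu",
}


def gpu_resource_limits(vendor, count=1):
    """Return a `resources` fragment requesting `count` GPUs from `vendor`."""
    if count < 1:
        raise ValueError("count must be at least 1")
    try:
        resource_name = GPU_RESOURCE_NAMES[vendor]
    except KeyError:
        raise ValueError(f"Unsupported GPU vendor {vendor!r}") from None
    return {"limits": {resource_name: count}}


if __name__ == "__main__":
    print(gpu_resource_limits("Nvidia"))
    print(gpu_resource_limits("AMD", count=2))
```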